Analysis Summary

Worth Noting

Positive elements

- This video provides a concise and accurate warning about 'error compounding' in LLMs, which is a well-documented technical phenomenon in automated software engineering.

Related content covering similar topics.

Java Performance Update: From JDK 21 to JDK 25

Java

Reading 40 Software Books and Comparing Them All Head to Head!

Book Overflow

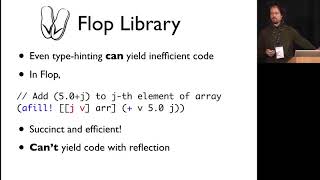

Why Prismatic Goes Faster With Clojure Bradford Cross

Zhang Jian

Sr. Engineer hearing about "Claude Code"

Kai Lentit

Build to Last — Chris Lattner talks with Jeremy Howard

Jeremy Howard

Transcript

When you get spaghetti code, it's typically when an agent has maybe gone on and built something for multiple iterations, maybe multiple features, and it just started kind of like going off the rails a little bit. One bad thing that they're really good at is agents can compound errors very quickly. If an agent has one misunderstanding in a code, and then it sees that misunderstanding that it created in step one, it can double down and create another error in step two. It'll magnify it. The most important thing is like making sure that the first thing that the agent sees is completely robust and it's completely airtight in terms of design, in terms of testing, in terms of like the build, like a lot of these like kind of core parts of the codebase itself before you even think about the agent.
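One rough way to picture the compounding described above is a toy model, not something the speaker states: assume each agent step is correct with a fixed probability, that steps are independent, and that a single wrong step is never repaired. The function name `chance_all_steps_correct` and the 0.95 accuracy figure are hypothetical and chosen only for illustration.

```python
def chance_all_steps_correct(per_step_accuracy: float, steps: int) -> float:
    """Probability that every one of `steps` steps is correct.

    Toy assumption: each step succeeds independently with the same
    probability, and an early mistake is never corrected later.
    """
    if not 0.0 <= per_step_accuracy <= 1.0:
        raise ValueError("per_step_accuracy must be between 0 and 1")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    return per_step_accuracy ** steps


if __name__ == "__main__":
    # Hypothetical 95% per-step accuracy over longer and longer runs.
    for steps in (1, 5, 10, 20):
        chance = chance_all_steps_correct(0.95, steps)
        print(f"{steps:>2} steps: {chance:.3f}")
```

Under these assumptions, the chance of a fully clean run drops from 0.95 after one step to roughly 0.60 after ten and roughly 0.36 after twenty. Real agents may do worse than this model suggests, since the transcript describes later steps building on earlier mistakes rather than failing independently.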

Video description

AI agents are great until they turn your codebase into a bowl of spaghetti. In this video, we’re breaking down why AI agents often "double down" on their own mistakes. If there’s one tiny misunderstanding in step one, the agent doesn't just miss it; it magnifies it in step two. Before you know it, you're looking at a mountain of technical debt. The secret? Don't let the agent start until your foundation is airtight. Focus on robust design and testing first, or prepare to spend your weekend debugging AI-generated chaos.

EO stands for Entrepreneur & Opportunities. As we're looking to feature more inspiring stories of entrepreneurs all over the world, don't hesitate to contact us at partner@eoeoeo.net

LinkedIn | @EO STUDIO
X | @eostudi0