Dreams of Code · 174.2K views · 7.9K likes

Analysis Summary

Worth Noting

Positive elements

- This video provides high-quality, practical demonstrations of modern Rust-based alternatives to classic Unix utilities like grep and find.

Be Aware

Cautionary elements

- The use of 'AI agents' as a justification for learning these tools is a topical hook that may overstate the actual connection between CLI utilities and AI workflows.

Related content covering similar topics.

See your entire year in HEY Calendar

37signals

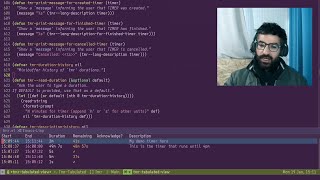

Emacs: easily set timers with TMR

Protesilaos Stavrou

Emacs Goodies - #7 Proced Mode

Goparism

AI Revisited - part 2 – REWORK

37signals

This New Claude Code Feature is a Game Changer

Nate Herk | AI Automation

Transcript

When it comes to writing code, I've been working in the terminal now for just over 10 years, and it's perhaps one of the best decisions I've ever made. Not only has it improved my own productivity when it comes to developing software, but given the recent rise of AI agents such as Claude Code and Gemini CLI, both of which operate inside of the command line, having this terminal-based workflow is perhaps now more important than ever before. By default, however, the terminal isn't exactly the most hospitable place, especially if you're coming from a more full-featured IDE or text editor such as JetBrains, VS Code, or even Cursor. Therefore, given that more and more people are likely going to be using the terminal in the near future, I thought it would be worthwhile sharing some of my favorite CLI tools that I've picked up over the past 10 years, which have not only helped to improve the experience of working in the terminal, but have also helped to make me even more productive.

To kick things off, let's start with one of my favorite CLI tools that I picked up in the past couple of years: zoxide, which is a drop-in replacement for the cd command, one that makes navigating your file system from the terminal extremely efficient. To show how it works, let's first take a look at how I would normally change into a directory via the CLI, which is by using the cd command. Here I want to change into a project I'm currently working on, found inside of my home folder at projects/zenhq/studio/studio-app, which as you can see is kind of a tedious path. By using the cd command I have to type out the full path including any symbols, which as I mentioned is somewhat tedious and can be error-prone, making navigating your file system in the terminal a little less fun. So let's take a look at how we can achieve the same thing using zoxide, which in my case I've set to the command of z.
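The commands typed on screen are not captured in the transcript. As a rough illustration of the idea only, the sketch below mimics the kind of keyword matching zoxide applies to directories it has already seen: every keyword has to appear in the path, in order, ignoring case. The function name `matches` and the example path are assumptions made for this sketch; real zoxide also ranks candidates by how often and how recently they were visited, which is left out here.

```shell
# Simplified stand-in for zoxide's matching (NOT its real implementation):
# succeed when every keyword occurs in the path, in order, ignoring case.
matches() {
  path=$(printf '%s' "$1" | tr '[:upper:]' '[:lower:]')
  shift
  for kw in "$@"; do
    kw=$(printf '%s' "$kw" | tr '[:upper:]' '[:lower:]')
    case "$path" in
      *"$kw"*) path=${path#*"$kw"} ;;  # keep only the text after this match
      *) return 1 ;;
    esac
  done
  return 0
}

# Hypothetical directory, standing in for one that was visited before.
dir="/home/me/projects/zenhq/studio/studio-app"

matches "$dir" studio app && echo "studio app -> match"
matches "$dir" app studio || echo "app studio -> no match (wrong order)"
```

This is why a short query can stand in for a long path: only a few distinguishing fragments of the path need to be typed.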
To change into the same directory, rather than typing out the full path including the symbols, I can instead just pass in the following path of projects zenhq studio app and it'll take me to the exact same directory. As you can see, this requires far less typing to achieve the same thing. However, we can actually optimize this even further, as I can reduce this path down to just z studio app and it'll again take me to the exact same directory. Very cool. The way that zoxide works is by performing fuzzy matching of the arguments you pass in against the paths of any directories you've already visited using zoxide. This means you don't need to type out the exact path in order for it to match, which can help to save a lot of time when jumping between different projects and directories. So, for example, if I want to jump to my Neovim configuration, rather than typing in cd ~/.config/nvim, I can instead just type in cd config. Or if I want to head over to my Dreams of Code web application, I can just do cd dreams of code. Additionally, you'll notice that I'm using the cd command now instead of z, which is something you can configure yourself. In doing so, it allows you to use cd as you normally would, but with the added benefits provided by zoxide. If you're interested in learning more about how zoxide works, including some of the caveats that are associated with it and how to configure it for your own system, I've actually done a dedicated video looking at zoxide in more detail, which you can find a link to in the description down below.

Okay, so this next tool is one that I've found to be incredibly useful when it comes to working in a code base you might be unfamiliar with, which, given the rise of agentic AI, is more and more likely to be the case. This command is rg, which is the shorthand for ripgrep, an improved version of grep.
If you're not too sure what grep is, it's basically a CLI command that allows you to search for occurrences of text patterns inside of files in a directory, which makes it incredibly useful when searching for code inside of a project. For example, here I'm using it to search for any instances of user from request inside of the orly project I created for my last video. The grep command itself comes as standard in modern Unix-based operating systems, but it's not exactly what I would call a modern command, especially given how slow it actually is. For example, here I'm searching for all instances of isAdmin inside of my Dreams of Code web app, which as you can see is not only taking a rather long time, but it's also searching in directories I don't really care about, such as the node_modules directory. This is where ripgrep brings a number of different improvements to the grep command. The first of which is that ripgrep is fast AF. For example, if I go ahead and search for instances of isAdmin again inside of my Dreams of Code web app, you can see this time by using ripgrep, or rg, it's significantly faster than using its counterpart command. This increase in speed not only helps to improve the way that I work, but it also reduces the risk of my brain drifting onto something else whilst waiting for the results to come back. Additionally, you'll also notice that I'm no longer getting results from the node_modules folder. This is because ripgrep comes with a bunch of defaults that make it much easier to work with and help to improve one's sanity. One of these sane defaults is that ripgrep won't search any files or directories that are listed in a project's .gitignore, which makes it much easier to only see results you care about. You can of course disable this behavior if you ever want to search in ignored files or directories such as your node_modules, but most of the time I find that I rarely do.
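The exact commands from the video are not in the transcript, so the following is only a sketch of the comparison being described. The file names and contents are made up, and the demo runs in a throwaway directory. With grep, recursion and directory exclusion have to be requested explicitly; the rg calls are only attempted when ripgrep and Git happen to be installed, since ripgrep applies `.gitignore` rules inside a Git repository.

```shell
# Throwaway project layout (hypothetical file names) to search through.
demo=$(mktemp -d)
mkdir -p "$demo/src" "$demo/node_modules/lib"
echo 'if (user.isAdmin) { grant(); }' > "$demo/src/auth.js"
echo 'exports.isAdmin = true;'        > "$demo/node_modules/lib/index.js"
echo 'node_modules/'                  > "$demo/.gitignore"
cd "$demo" || exit 1

# grep: recursion (-r), line numbers (-n) and skipping a directory are opt-in.
grep_all=$(grep -rn 'isAdmin' .)
grep_filtered=$(grep -rn --exclude-dir=node_modules 'isAdmin' .)
printf '%s\n' "$grep_all" '---' "$grep_filtered"

# ripgrep: recursive by default; --no-ignore switches ignore-file handling off.
if command -v rg >/dev/null 2>&1 && command -v git >/dev/null 2>&1; then
  git init -q .
  rg 'isAdmin' || true
  rg --no-ignore 'isAdmin' || true
fi
```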
In addition to this, another sane default that ripgrep provides over its legacy counterpart is that it'll automatically search through all files and directories recursively, whereas in grep this requires the -r flag in order to do so. Whilst this isn't too difficult to add to a grep command, it's these small changes that make rg much more user-friendly in my experience. Another sane default that ripgrep has is color enabled by default, which in my opinion makes it a lot easier to see the actual results. Now again with grep, you can enable this, but it requires setting yet another flag. All of this makes ripgrep a much more useful command when working with larger projects, and even when just working across your entire file system, which again makes me much more effective when working in the terminal.

So this next tool continues the trend of improving legacy CLI commands, this time the find command, which as the name implies is used to find files. In this case that tool is fd, which is to find as ripgrep is to grep, bringing about a number of similar improvements. However, rather than searching for files that contain a string, find is used to search for files with a certain name. For example, let's say I want to list all of the files that have the word auth in their file name inside of my Dreams of Code web application. With the find command, this looks as follows: specifying the directory I wish to search in, which in this case is the current directory denoted by the dot syntax, followed by the type flag with the f parameter, meaning I want to search for files instead of directories, and lastly passing in the name of the file I wish to search for using the name flag, which in this case is names that contain the word auth, using the following glob syntax. Whilst this works, you can see that again it's kind of slow and also produces results that I don't care too much about, again inside of my node_modules.
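The find invocation is only described verbally in the transcript; reconstructed from that description it would look roughly like the sketch below. The directory layout and file names are invented for the demo. The fd line is a plain name search and is only run when fd is installed.

```shell
# Throwaway layout (hypothetical file names).
demo=$(mktemp -d)
mkdir -p "$demo/src" "$demo/node_modules/pkg"
touch "$demo/src/auth.ts" "$demo/src/auth.test.ts" "$demo/src/route.test.ts" \
      "$demo/node_modules/pkg/oauth.js"
cd "$demo" || exit 1

# find: start directory (.), -type f for regular files, -name with a glob.
found=$(find . -type f -name '*auth*' | sort)
printf '%s\n' "$found"

# Leaving node_modules out has to be spelled out by hand with -prune.
found_pruned=$(find . -path ./node_modules -prune -o -type f -name '*auth*' -print | sort)
printf '%s\n' "$found_pruned"

# fd takes just the pattern (the binary is called fdfind on some distributions).
if command -v fd >/dev/null 2>&1; then
  fd auth || true
fi
```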
So let's go ahead and compare this to the fd command to see what improvements it brings. In order to do so, all I have to do is use the fd command with the string of auth, which already is a much cleaner interface. Upon running it, you can see it's also a lot faster and doesn't produce any results found inside of my node_modules, which is listed in my project's .gitignore. Whilst all of these improvements are incredibly welcome, perhaps the biggest improvement that I personally find when it comes to the fd command is that it's just a lot more intuitive to use. For example, let's say I want to find all of the files inside of this directory that contain the word test, but I want to exclude those that contain the word route. To do this with fd is as simple as using the --exclude flag, which again is rather intuitive to use. However, if we go ahead and compare this to the find command, you can see it's nowhere near as intuitive and requires us again to make use of multiple different symbols, which as I mentioned back in zoxide is something I like to avoid. Additional improvements that the fd command brings include both regex and glob support, as well as case-insensitive search, which is enabled by default. Because of these improvements both to performance and usability, I find that I end up using fd way more than I ever used find, which has helped me to better find files on my system.

So this next command is the big dog, perhaps having one of the biggest improvements to the way that I work in the terminal of all time: tmux, which I've actually done a whole video on in the past, talking about how it's forever changed the way that I write code. In case you haven't seen that video or you're unaware of what tmux is, tmux is a terminal multiplexer, meaning that it allows you to spawn multiple pseudo terminals and arrange them either as panes, individual windows, or even in multiple sessions, each of which you can navigate between.
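As a minimal sketch of that session, window and pane model, driven entirely from tmux's own command line: the session name, window name and directories below are placeholders, and everything is created detached and torn down again so nothing is left running. The block does nothing if tmux is not installed.

```shell
session="sketch-$$"   # hypothetical session name
windows="skipped"     # stays "skipped" when tmux is unavailable

if command -v tmux >/dev/null 2>&1; then
  # Detached session (-d) whose first window starts in the home directory (-c).
  if tmux new-session -d -s "$session" -c "$HOME" 2>/dev/null; then
    # Second window, named "editor", starting in /tmp.
    tmux new-window -t "$session:" -n editor -c /tmp
    # Split that window into two side-by-side panes.
    tmux split-window -h -t "$session:editor"
    windows=$(tmux list-windows -t "$session" | wc -l | tr -d ' ')
    tmux kill-session -t "$session"
  fi
fi
echo "windows: $windows"
```

Because each step is an ordinary command, the same calls can be placed in a script to build a preferred layout in one go.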
Now, you may be thinking, why not just use native tabs and splits found in some terminal emulators such as Kitty or Ghostty? Well, you can, but tmux provides a number of different benefits that you just don't get when using these applications. For starters, it's entirely keyboard-based, which if you're using the terminal is something you really should be optimizing for. This means that it forces me to keep my hands on the keyboard in order to navigate around different panes, windows or even switching sessions, which helps me to keep my focus and therefore improve my productivity. Of course, some terminal emulators also allow for keyboard navigation. However, another benefit of tmux is that it happens to be app agnostic, meaning that if I ever did want to one day change my terminal emulator from Alacritty to something else, I wouldn't have to relearn any keyboard shortcuts or bindings in order to be able to navigate my terminal environment. Additionally, because tmux has a CLI interface, it allows you to automate various different actions, such as being able to create a new window whilst also changing into a given directory. This comes in handy when you decide to level up your terminal-based workflow. In my case, I've added these automations into my own Vibe CLI application, which I created to help me vibe code more effectively. For example, if I go ahead and run the following Vibe command, you can see it opens up a new tmux window with a new Git worktree inside and also happens to open up Claude Code, allowing me to open up a brand new agent to work on a new feature, all inside of a fresh session that won't interfere with my existing code. Perhaps the biggest benefit that I find of using tmux, however, is when it comes to session persistence, in which sessions are able to be reattached to in the event a window or a connection is ever closed.
This session persistence not only comes in handy when working on my local machine, but it's also really advantageous when it comes to accessing remote machines over SSH, be it another computer on my home network, such as my workstation, or when working on a VPS. To show what I mean, let me quickly SSH into a VPS instance that I have running already, which is actually powered by the sponsor of today's video, Hostinger. I'll talk about Hostinger more in a second. But for the moment, now that I'm SSH'd in, let's go ahead and load tmux on the server, followed by starting a long-running task, which in this case is htop. By the way, if we take a look at htop, you'll notice that this VPS instance has 8 gigs of RAM. And no, that's not a typo. Hostinger specializes in providing low-cost VPS instances that have a lot of available memory, which makes them great for running multiple services at the same time. For reference, the current instance that I'm using is the KVM 2, which is what I use to run the majority of my services on. The KVM 2 provides two vCPUs and a massive 8 gigs of RAM, all for the low cost of $6.99 a month when you purchase a 24-month term. Yeah, it's ridiculously affordable. If that wasn't good enough, however, you can actually get this price even lower. To do so, all you need to do is click the link in the description down below and use my coupon code DREAMSOFCODE when you make a purchase, which will get you an additional 10% off the already low price. So, to get your own VPS instance to deploy pretty much all of your services onto, visit hostinger.com/dreamsofcode using the link in the description down below. And make sure to use my coupon code DREAMSOFCODE to get that additional 10% off. A massive thank you to Hostinger for sponsoring this video.

Okay, so let me show you how we can use tmux to reattach to a running session following a disconnect.
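The persistence idea can be sketched locally without SSH. Here `sleep 60` stands in for the long-running task (the video uses htop), the session name is a placeholder, and the interactive attach step is left as a comment so the block runs unattended. It is skipped entirely when tmux is not installed.

```shell
session="longjob-$$"   # hypothetical session name
state="skipped"        # stays "skipped" when tmux is unavailable

if command -v tmux >/dev/null 2>&1; then
  # Start a long-running command in a detached session; it keeps running
  # on the tmux server even though no terminal is attached to it.
  if tmux new-session -d -s "$session" 'sleep 60' 2>/dev/null; then
    state="missing"
    tmux has-session -t "$session" 2>/dev/null && state="running"
    tmux list-sessions
    # Interactive step, left out of the sketch:
    #   tmux attach -t "$session"   # `tmux a` attaches to the most recent session
    tmux kill-session -t "$session"
  fi
fi
echo "session state: $state"
```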
If I go ahead and press the following keyboard combination in order to force disconnect SSH, followed by then SSHing back in to the server, I can now reattach to my existing tmux session using the following tmux a command, which as you can see brings me straight back into htop. This is really great if you happen to be performing any long-running tasks, such as running scripts or other processes that you don't want to be killed if your SSH connection drops. One situation in which I find tmux to be really useful is when it comes to the initial setup on a VPS, as it allows me to reattach to my session and pick up where I left off in the event that I'm accidentally disconnected, which can sometimes happen when making changes to SSH security. As I mentioned, tmux has had perhaps the biggest impact on the way that I work in the terminal. So much so that I actually did an entire video talking about how you can configure it, as well as a little bit of a tutorial. If you're interested, you can find a link to that video in the description down below.

So, this next CLI tool is actually a rather recent addition to my command line toolkit. However, despite only being in it for a short amount of time, I've found it already to be a huge improvement to my current workflow. This is the GitHub CLI, which I've been using more than the actual GitHub website itself. If you're unfamiliar with the gh CLI, it basically allows you to perform many of the actions you would typically take on github.com, but without the need of ever leaving the terminal, which is something I really appreciate, especially as every time I open a web browser, it's basically a dice roll to determine whether or not I'll be able to keep my focus or whether I'll get distracted and fall into a social media black hole.
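As a sketch of what doing github.com tasks from the terminal can look like, the wrappers below call three common gh subcommands. The function names, repository name and default limit are invented for illustration, and nothing is executed against GitHub here: running the functions requires gh to be installed and authenticated.

```shell
# Thin wrappers (hypothetical names) around common gh subcommands.
new_private_repo() { gh repo create "$1" --private; }
open_issues()      { gh issue list --state open --limit "${1:-10}"; }
draft_pr()         { gh pr create --title "$1" --body "$2"; }

# Example calls, commented out because they talk to GitHub:
#   new_private_repo my-new-project
#   open_issues 5
#   draft_pr "Add login form" "Summary of the change goes here"
```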
For me, some of the biggest uses I find of the GitHub CLI include creating new repositories for any new projects I get started with, checking for any open issues for existing projects, and perhaps my favorite recent use case, using it with agentic AI to automatically create pull requests with well-written descriptions. This not only helps to overcome what I think is one of the most tedious parts of developing software, basically anything that involves summarizing the work you've done already, but thanks to Dokploy, being able to create pull requests more easily allows me to automatically create review apps that I can use to check my changes against. All of this to me makes the GitHub CLI incredibly valuable when it comes to a terminal-based workflow in 2025.

Whilst we're on the subject of CLIs for web applications, another tool I've been using a lot recently is the Doppler CLI. If you're unaware of what Doppler is, it's basically my favorite secrets management platform, having discovered it about 3 years ago. I'll do a dedicated video talking about Doppler in more detail another time, but the general idea is that it allows you to easily add secrets into projects for different environments, including dev, prod, and even personal ones as well. This is not only incredibly useful when it comes to application deployment, but thanks to the CLI, you can also use Doppler to replace any .env files found on your local system. For example, if I want to go ahead and run a local version of my Dreams of Code website, but with all of the environment variables for my dev environment loaded, I can just go ahead and run the following doppler run command, passing in the project, which in this case is dreams of code, and the configuration, which is dev. Then all I need to do is add in the following double dashes and use the bun run dev command. And with that, I have a local version of my Dreams of Code website, but running on my dev environment.
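The underlying mechanism is that the secrets are injected as environment variables into the child process only, rather than being read from a file on disk. The runnable part of the sketch below imitates that with plain `env` and a dummy value (the variable name `DEMO_SECRET` is made up); the Doppler command itself is shown as a comment, reconstructed from the spoken description, with the exact project name spelling being an assumption.

```shell
# Conceptual stand-in: the variable exists only in the child's environment.
child_view=$(env DEMO_SECRET=dummy-value sh -c 'echo "child sees: $DEMO_SECRET"')
parent_view="parent sees: ${DEMO_SECRET:-nothing}"
echo "$child_view"
echo "$parent_view"

# The Doppler form described above would look roughly like this (not run here):
#   doppler run --project dreams-of-code --config dev -- bun run dev
# Everything after the double dash is the command that receives the secrets.
```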
By doing so, it means that I can get all of the secrets that I need for a development environment of my application, but without having to store them on my actual disk inside of a .env file, which is something I always feel a little weird about, especially when using agentic AI, which can sometimes check your .env files for you. And that's not even considering the recent examples we've seen of AI accidentally dropping prod. Personally, I find that using the Doppler CLI not only adds an additional layer of security when working on my own projects, but it's also incredibly valuable when working as a team. So much so that I'll likely do a dedicated video on it in the near future.

Whilst we're on the subject of managing both secrets and sensitive values, another CLI tool that I've found to be invaluable for working with sensitive data is pass, also known as password store. Pass describes itself as the standard Unix password manager, although it doesn't actually come installed by default on Unix systems. Personally, however, I like to describe it as the most perfect password manager for the terminal, making use of open source technologies such as GPG and Git, allowing you to effectively self-host your own passwords. Personally, I like to use pass when it comes to managing both API tokens and database URLs if I don't store them inside of Doppler. By doing so, I can easily use pass to load these in either as CLI arguments or by setting them as environment variables, all without ever having to expose the actual secrets. This is done by using either the pass or pass show command as follows. Here I'm using it to set the OpenAI API token environment variable. And here I'm using it with psql in order to access my prod database, all without ever exposing the actual secret value or writing it to my shell's history.
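The commands shown on screen are missing from the transcript; a sketch of the pattern being described follows. The entry names (`api/openai`, `db/prod-url`), the variable name `OPENAI_API_KEY` and the helper `load_secret` are assumptions for illustration. The lookup is only attempted when pass is installed and such an entry exists.

```shell
# By convention the first line of a pass entry is the secret itself.
load_secret() { pass show "$1" | head -n 1; }

# Hypothetical entry name; skipped when pass or the entry is missing.
if command -v pass >/dev/null 2>&1 && pass show api/openai >/dev/null 2>&1; then
  OPENAI_API_KEY=$(load_secret api/openai)
  export OPENAI_API_KEY
fi

# Passing a secret as an argument without typing it out (not run here):
#   psql "$(load_secret db/prod-url)"
```

Because the secret is produced by command substitution, only the `pass show` call ends up in the shell history, not the value.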
Additionally, you can also use password store as a normal password manager by adding in the -c flag, which will go ahead and copy the password to your clipboard, which you can then paste into wherever you need to. In addition to this, because password store uses Git under the hood, it means that every version of your secret is tracked, and it also allows you to push these secrets up to a remote repo. Now, that may sound kind of scary, but because these secrets are encrypted using GPG, it's effectively incredibly secure, provided you look after your GPG keys, which in my case are managed using a YubiKey, meaning that it's pretty much impossible to get these out. This has the additional benefit that I'm able to easily clone all of these passwords and secrets onto another machine securely by cloning down the remote repo and then using my YubiKey with it, which requires me to pass in my PIN. Once I take the YubiKey away, these secrets are no longer accessible. Personally, I find that using password store makes working with both secrets and passwords in the terminal a lot easier, especially if it's for more ad hoc tasks and commands rather than for dedicated projects, which is what I tend to use Doppler for. If you're interested in finding out more about how you can set up password store yourself, I've done a whole video on this already, which you can find linked in the description down below.

Next up, we have jq, which I feel is pretty much a staple for any backend or full-stack developer. If you're not too sure what jq is, it's effectively a tool to make working with JSON data way more effective. By default, it'll automatically format any JSON that you pass in via standard input, making it easier to read. However, this only really scratches the surface of what you can do. This is because by making use of jq filters, you can both pull out values from the JSON data and perform transformations on them.
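A small sketch of those three uses, formatting, extracting and transforming, on an invented payload: the field names `id` and `amount` and the values are assumptions, not data from the video. The jq calls are skipped when jq is not installed.

```shell
# Hypothetical payload; the field names are assumptions.
sales='[{"id":1,"amount":120},{"id":2,"amount":80},{"id":3,"amount":50}]'
total="skipped"   # stays "skipped" when jq is unavailable

if command -v jq >/dev/null 2>&1; then
  printf '%s' "$sales" | jq .              # pretty-print the document
  printf '%s' "$sales" | jq '.[].amount'   # pull one field out of each element
  # Collect the amounts into an array, then sum them.
  total=$(printf '%s' "$sales" | jq 'map(.amount) | add')
fi
echo "total: $total"
```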
For example, here I have an API that returns a list of sales in JSON. By using jq with the following filter, I can aggregate the total value of these sales into a single result. Very cool. Because of these filters, jq is really useful when it comes to things such as debugging and automation, allowing you to easily work with JSON data inside of shell scripts. Personally, I think that jq is one of those tools that should be part of every web developer's toolbox, even though the syntax itself can be somewhat tricky to learn. However, by doing so, I feel like it pays off in dividends.

Okay, so the next CLI command that has improved the way that I work in the terminal is of course stow, which is what I use for managing my dotfile configurations. The way stow works is that it allows you to create symbolic links for any of the files inside of a directory, which makes it great for storing all of your dotfiles inside of a repository and then using stow to effectively place them wherever you need them to be on your system, be it in the root of your home folder or inside of a directory inside of the .config directory. This allows you to then effectively use Git in order to version control your dotfiles, which makes it great for reusing them across multiple machines or even on a VPS instance if you desire. This also has the benefit of reducing the amount of time you spend configuring, which I know is difficult for those of us who like to work in the terminal. But by having it version controlled, we only need to configure it once and then it will be shared across all of our machines. I've done a whole video on stow on my second channel, which goes into much more detail about how it works and how you can set it up for your own dotfile configurations. One thing to note, however, is that recently I've actually been using a different tool to manage my dotfiles, Home Manager, which works really well when it comes to both NixOS and nix-darwin.
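To make the symlink approach described for stow concrete, the sketch below does by hand, with `ln -s` in a throwaway directory, roughly what a single stow invocation would do. The directory names and the `nvim` package are assumptions, and stow itself creates relative links, whereas this sketch uses an absolute one for simplicity.

```shell
# Throwaway "home" with a dotfiles repository inside it (hypothetical layout).
home=$(mktemp -d)
repo="$home/dotfiles"
mkdir -p "$repo/nvim/.config/nvim" "$home/.config"
echo '-- example config' > "$repo/nvim/.config/nvim/init.lua"

# Manual stand-in for: (cd "$repo" && stow --target="$home" nvim)
ln -s "$repo/nvim/.config/nvim" "$home/.config/nvim"

ls -l "$home/.config"
cat "$home/.config/nvim/init.lua"
```

The real file lives in the repository, so it can be committed and pushed, while programs keep reading it from its usual location.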
Despite this, however, I actually don't use Home Manager for all of my dot configs, as I still find that the way that GNU Stow works with symbolic links turns out to be superior in some situations. So now I kind of use both. I have a video in the works talking about NixOS and how I use Home Manager, which has been planned for the future. So if that's something of interest, let me know in the comments down below and I'll bump it up the list, probably after I've done a video on why I no longer use Arch, by the way.

The last but certainly not least tool on this list is one that I find incredibly useful, but not by itself. This command is fzf ("F-Z-F"), or "F-zed-F" if you happen to speak the Queen's English. RIP Lizzy. If you're unaware of what fzf is, it's basically a fuzzy finding tool for the command line, allowing you to search across inputs in a really efficient way. If I go ahead and run the fzf command, you can see that it opens up a list of all of the files inside of my current directory. I can then go ahead and start typing, and it will filter these to whatever I've typed in a fuzzy way. Then, upon finding the file that I want to select, if I go ahead and press the enter key, you can see it prints it out to the console. As I mentioned, this by itself isn't exactly the most impressive thing. However, the real power behind fzf is when you couple it with other commands. For example, in my own shell setup, I have fzf enabled with Zsh, which means it works for all of my tab completions. One such example is when it comes to password store. So let's go ahead and say I want to load the database URL environment variable with a value from password store. However, I can't remember the path to that actual password itself. Fortunately, I can just press the tab key and it will load up the fzf window for me, allowing me to interactively search through all of my passwords to select the correct one.
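The tab-completion setup itself is not shown in the transcript. As a stand-in, the sketch below feeds a made-up list of entry names to fzf's non-interactive `--filter` mode, which applies the same fuzzy matching and simply prints the matches. The entry names and the query are assumptions, and the block is skipped when fzf is not installed.

```shell
# Hypothetical password-store entry names, one per line.
candidates='db/prod-url
db/dev-url
api/openai
api/stripe'
picked="skipped"   # stays "skipped" when fzf is unavailable

if command -v fzf >/dev/null 2>&1; then
  # --filter runs the fuzzy matcher without the interactive picker.
  picked=$(printf '%s\n' "$candidates" | fzf --filter 'dbprod' | head -n 1)
fi
echo "picked: $picked"

# Interactive variant, left as a comment because it needs a terminal:
#   entry=$(printf '%s\n' "$candidates" | fzf)
```

Dropping `--filter` gives the interactive picker, whose selection can be captured with command substitution in the same way.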
In addition to extending CLI commands, fzf also works really well when it comes to building CLI applications. For example, in my own Dreams of Code CLI, I use fzf to interactively select lessons inside of my course when it comes to making edits or uploading videos, which I can do by either typing out the lesson/module number or just typing out the lesson slug. Using fzf as a sort of interactive mode for CLI applications is perhaps my favorite use case. In fact, I like doing it so much that I actually made a whole lesson on it in my CLI applications in Go course, which is available for you to purchase if you're interested. As with everything that I've mentioned in this video, there's a link in the description down below.

In any case, that wraps up 10 CLI tools that have improved the way that I work when it comes to working in the terminal. Of course, these aren't the only 10 tools that I use inside of the CLI, but they are perhaps the ones that I would say have had the biggest impact recently. That being said, if you want to know about some other tools that I'm using, let me know in the comments down below and I'll do a part two video to this one. In any case, I want to give a big thank you to the sponsor of today's video, Hostinger. If you want to have a VPS instance to host all of your services on or just to tinker with, Hostinger is a great and affordable option. So, make sure to use the link in the description down below and use my coupon code DREAMSOFCODE to get an additional 10% off. Otherwise, I want to give a big thank you to you for watching and I'll see you on the next one.

Video description

To get your own VPS instance - visit https://hostinger.com/dreamsofcode and make sure to use my coupon code DREAMSOFCODE for an additional 10% discount.

I've been working in the terminal now for just over 10 years, and so I thought I'd share some of my favorite CLI applications that I've picked up over that time that have helped to improve the way I work in the terminal.

Links:

- Zoxide Video: https://youtu.be/aghxkpyRVDY
- Tmux Video: https://www.youtube.com/watch?v=DzNmUNvnB04
- Stow Video: https://www.youtube.com/watch?v=y6XCebnB9gs
- Pass Video: https://www.youtube.com/watch?v=FhwsfH2TpFA
- CLI Applications Course: https://dreamsofcode.io/courses/cli-apps-go/

My Gear:

- Camera: https://amzn.to/3E3ORuX
- Microphone: https://amzn.to/40wHBPP
- Audio Interface: https://amzn.to/4jwbd8o
- Headphones: https://amzn.to/4gasmla
- Keyboard: ZSA Voyager

Join this channel to get access to perks: https://www.youtube.com/channel/UCWQaM7SpSECp9FELz-cHzuQ/join

Join Discord: https://discord.com/invite/eMjRTvscyt

Join Twitter: https://twitter.com/dreamsofcode_io

- 00:00:00 Intro
- 00:00:57 App 0 - zoxide
- 00:03:12 App 1 - rg
- 00:06:07 App 2 - fd
- 00:08:28 App 3 - tmux
- 00:13:22 App 4 - gh
- 00:14:43 App 5 - doppler
- 00:16:28 App 6 - pass
- 00:18:49 App 7 - jq
- 00:19:55 App 8 - stow
- 00:21:32 App 9 - fzf