We can't find the internet

Attempting to reconnect

Something went wrong!

Attempting to reconnect

Analysis Summary

Worth Noting

Positive elements

- This video provides a highly detailed explanation of dependency graph traversal and the rationale behind moving from artifact-based to source-based workflows in Clojure.

Be Aware

Cautionary elements

- The speaker frames the limitations of previous community-standard tools (Leiningen, Boot) as fundamental flaws to build consensus for the new official toolset.

Influence Dimensions

How are these scored?About this analysis

Knowing about these techniques makes them visible, not powerless. The ones that work best on you are the ones that match beliefs you already hold.

This analysis is a tool for your own thinking — what you do with it is up to you.

Related content covering similar topics.

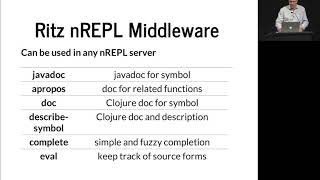

Ritz, The Missing Clojure Tooling Hugo Duncan

Zhang Jian

The Taming of the Deftype Baishampayan Ghose

Zhang Jian

The Data Reader's Guide to the Galaxy Steve Miner

Zhang Jian

Real World Clojure Doing Boring Stuff With An Exciting Language Sean Corfield

Zhang Jian

One to Rule them All Aaron Bedra

Zhang Jian

Transcript

[Applause] everyone so I am Alex Miller obviously so today I'm gonna talk about compostable tools specifically about tools depths and some of the things I've been working on with that for the last couple years I I gave a talk two years ago gyro closure about tools deafness is prior to release and on in that talk I mentioned these three problem focus areas that we that we had when we start first started talking about tools depth so I'm just repeating him here I'm not gonna repeat the whole talk about why we're working on those things um you can go watch that talk you know but I'm gonna skip through all that stuff but I'm gonna briefly mention a few of things so in terms of version resolution Richard talked a couple years ago about called speculation that was about semantic version and the problems of semantic version that's one issue the issue of breakage and how to turn breakage into growth and then issues around version selection and how what you test it like if you're testing a library with a particular set of versions and then you use that library in combination with other things in your application you're almost certainly not using the set of versions that you tested with so a lot of that version selection is actually a lie so so those are some of the version resolution related issues another big one was artifact focus so many years ago people did the hard work hey Phil hey Goldberg and and Chaz Emmerich in particular did a lot of critical work to make the Java library ecosystem available to closure through pomegranate and through line again and and those sorts of libraries and that was amazingly useful unfortunately the downside of that is that while we gained access to the art all this artifact ecosystem from Java we also sort of gave up a little bit of what makes closure special which is that it's a source source focused language so you don't actually need artifacts to start grabbing closure code using closure code so we wanted to kind of reclaim a little of that territory and be able to you know use dependencies the code that already lives and get like the files the source files out there we can just use it directly and then we initially release us with 1.9 which is when spec was introduced so at that point closure actually had a couple of dependencies that were built into the language and so from the very first moment that closure users are using closure they are have to resolve transitive dependencies and and somehow to be able to make class path and start the JVM and and do work so we didn't think it was really fair to to add that on without also trying to address some of those problems so the idea was to sort of address a little bit of that getting started experience and provide a way to run closure programs that would that would help out so the result of that was tools depths which was a library to construct class paths and clj or closure which are tools to build class paths and run programs and that was just almost exactly two years ago and since then we've continued to work on it there's been about 40 releases since then of those the library and the tool currently it's at about 6,000 downloads per month for brew which is the Mac package installer obviously people are using on Linux and windows and things like that as well so it's a little hard to tell exactly how much people are using it but that number continues to go up so I think people are you know continuing to adopt it and and use it for things additionally there's been somewhere around 30 different tools that have been built that work on top of deps Eden or closure the clj tool so people are continuing to pick it up and use it for things which has been which has been great seat because that's kind of what we wanted is to build this sort of ecosystem so and I'll talk more about that at the end so I'm gonna go and do a little bit of a sort of the details of how tool steps work works and then how clj works on top of it and then kind of pull out to some other things at the end so the idea with tools depths is that sir data and this is something that Lanigan kind of got right in having depths inside the project clj file there are some downsides to that in lining it which is that the project sealed a file is evaluated so there are a lot of cases where people have you know code that's embedded inside their depth so they're not truly data and that's something that's problematic and we've really fought hard to avoid stepping into that so I'm sure people who work on tool steps I've seen me say no to things like putting variables into the depths and various things like that we're gonna continue resisting that for as long as we can and as many ways as we can so some of the pieces that are all sort of involved in tools depths are having some declarative depths as data so that's the depth seat and file being able to do transitive dependency resolution when you encounter different versions of a particular library in your transitive graph being able to select the right the right one whatever right means being able to modify that dependency set for different uses use cases that you have in your project so you know I need to swap out a different closure version or something like that class path building just making class paths that I'm gonna use to actually run something and then having sort of late class path modifications where I need to swap out something or add something at the end and then also we have a sort of an extensible dependency procurer system so we originally launched with the ability to get access to maven depths and maven repositories and also local depths but then we've added get depth on since then and there's been a variety of little things added since then so a def seed file this is just an example probably a lot of people have seen these so I'm not gonna belabor it too much but it has sections in it for the local paths that you want to include on the class path for your dependencies this is your route set of dependencies and then for aliases that can combine a bunch of different modifications that you might want to make to your to your class path when you're doing some tasks so importantly this is plain eden there's no evaluation here there's no substitution there's no variables it's just data so you can just slurp it in as an Eden file and do stuff with it and we have a bunch of tools out there that are doing that and it's great that they can do that so dependency traversal this is something that is actually built into maven and if you use any of the other tools in the ecosystem if you're using line again or boot or that those are both used pomegranate which is a library that was originally created by Chaz Emmerich pomegranate calls out to maven uses the maven libraries and so you're using the maven dependency traversal logic so clj and depth tools depths are different in that we actually are doing the dependency traversal rather than using maven sand and the main reason for that is that we're not beholden to mavens idea of what artifacts are in that case we can we are in control of understanding what what our dependency is and what its transitive dependencies are and how to get them and so that that's what lets us you know get access to get and potentially other systems as well to do that but that meant that we had to write that so that is the core bit of logic that's actually inside of tool steps so you need to expand this transitive dependency graph so you go examine a new a new library you find it strands dependencies you then go examine those dependencies and find they're you know and you have to keep going when you run into multiple versions of the same library you need to somehow select one you need to deal with exclusions which turns out to be problematic in a lot of places and this is happening in a in a loop so you also need to make sure that you don't you know run into a cycle you know or go off and hit a stack overflow or do those sorts of things so there's just some care that needs to be taken there so given a simple depths Eden this is one that has just closure and core memorize in it I'm gonna have a series of diagrams here and briefly to explain the diagram so on the top here is the the first dependency diagram and we're I'm sure sort of showing you the expansion here so you're starting from def seaton and you're going to consider a new library and coordinate that you're going that you might want to add to your overall dependency set so here I'm considering closure version 1 10.1 and you will see either a green box or red box green means that we in this step are going to decide to include it red means that we're going to assign not to include it and you'll see a label on the edge that says why are we including it so in this case it says it's a new top dependency and that's called out specifically because the top dependencies are actually special and talked about that a minute but and basically as we consider this we're also going to find new potential children if we decide to include it closure itself has children child dependencies and so we need to put those on a queue to consider next and so this is all built around a queue it's not a recursive algorithm it's an iterative algorithm that's working off of a queue and that allows us to not you know to look at arbitrarily deep dependency trees without hitting Stack Overflow and worrying about that kind of stuff so in this case we include side to include closure we take its child dependencies and throw them on the queue which you can see in these and then the second one we consider core memo eyes which is our other top dependency we say great I'll add that on to and then we start to consider the children of closure so closure depends on both spec and encore specs alpha so we include both of those and you'll see these say not new top dependency but new dependency so we'll just add those on if we find something new generally we just add it and then we will continue looking through poor memorises dependencies and then finally end up in a final dependency tree the one at the bottom there so now that we've got that whole that whole tree then we can pass it to the next step which is go look going put together the jar files from all those different things and the paths from my local DEP seaton file and assemble a class path I'll talk about that a little bit more later so a version selection so if you happen to have have encountered more than one version of a particular library in this traversal you have to choose which one to use so and this is kind of the classic Java dependency health problem so Java works off of a linear class path it's a set of routes that you're going to look in it's gonna look in order across them and find one the classic problem in a Java program is to our JVM program is to have multiple versions of the same library in your class path and get sort of some mismatched combination of the two of them like you know these you've got the older version first and a new version next and the new version has some additional things in it and you can see some of the new one but some of the old one and that sort of thing so we took it as basically and it's basically an error if you try our trying to include two different versions of the same library you have to do something to choose one of them and this goes back to Rich's speculation talk that we we really want to choose the new one and that actually differs from maven maven has a much simpler algorithm and it really is based more on what it encounters first so basically whatever I see first that's what I'll use that's essentially that may have an algorithm from the root of the tree down so we have a little bit different logic in there and and so it is possible for you to end up with a different class path using tool steps than it is through other closure tools in practice it is I'd say is actually relatively rare to see that or if you do see it for it to be a problem so we have you know work through some issues around some of those kinds of things and there are solutions if you are not getting what you want there are alternatives to that and then the other thing that I'm calling here path consistency and I will talk about that a little bit later so if you have a top dependency I mentioned those are special and that's because one of the big hammers you have is like if I'm sitting in a particular project I'm declaring the top level of dependencies that's really my main visibility of saying what I want and so you always have the option at that top level of saying I specifically want this version of the dependency and tool steps is going to honor that so whatever you put at the top level project whatever project you're in right now you're going to get those versions definitively if you get then if you're like a library and then you get included in another application that application may make a different choice so it may choose you know to use a different thing underneath you but you can be guaranteed that at the particular project you're at this is what you're gonna get so this is a midway through one of these expansions and you'll see that there's a tools reader that was a top-level DEP and this was version 1 3 2 and then we also included chorusing which includes tools analyzer JVM which includes tools reader so we're considering this new alternative version of tools reader which is 1 0-0 beta for obviously 1 0-0 beta 4 is less than 1.3 - that's a newer version so you'd probably want the one 3 2 version anyways but in particular here the decisions made to use the top-level version because we've already encountered this dependency at the top and it and the user said use that version so we're gonna use that version so we don't even bother can really considering it we don't do any work looking at it dependency users or doing version comparisons or any of that stuff we just assume that's what you want so that's what we do there and you'll see that this is a little bit redrawn diagram there's actually tools reader now that's dependent on from the top and also from one of the child dependencies so you're now actually not into a tree as much as you are into a graph you can also run into the case where you might have two versions from different sibling transitive dependencies so none of them are top-level dependencies in that case you have to choose what to do so here we encounter tools reader it's the first time we've seen it in this expansion so we include it it's a new dependency and then we might encounter another version of tools reader a different version so before we include we happen to see 1 0 0 beta 4 first here we saw 132 and so we said oh that's a new version so I'm gonna include that one instead and then basically that that prior library is gonna also use that one version and so in any of these expansions you will never see the version of library more than once it'll only in be in there once pack consistency so this is I know it's late in the conference but I'm gonna ask you to participate in something so I'm gonna explain this this scenario a little bit so we've got at the root depth Eden we've got three libraries a B and C those are all there are no conflicts we're gonna use all of those but then underneath those we have a which depends on the 1.0 version of D we have B which depends on the 1.0 version of e which depends on the 2.0 version of D and then we have C which depends on the 2.0 version of e so there are four different possibilities here of which version of D or E we include there's two versions of each we have to decide which one to include so I'm gonna give you one minute actually think about it for one minute and try to decide what you think is the best answer out of these and I spent several days thinking about this so I'm cheating a little bit but exactly that's true so okay so who thinks that it's number one the concluding D version 1 and E version 1 not too many okay who thinks it should be number 2 here which is D version 1 and E version 2 hands for that one what about for number 3 where you have eversion 1 but D version too few hands out there fewer than the last one without number 4 here did D and E both version 1 so I see definitely a few hands there too ok so interestingly this is a place where maven and tool steps are gonna give you different answers so maven as I said really looks at what it happens to run across first so in this case assuming you're walking your top to bottom on these dependencies in a breadth-first search is actually finds d1 first and then e1 second and it's gonna include those two versions so if you go set the situation up and maven and ask it for what the path is that's what you're gonna get Toole steps right now will will give you d version 1 and e version 2 and then number 3 I think is also a reasonable solution so I would contend that number 4 has some downsides it's both it's giving you the oldest version of everything and it's also giving you it's not giving you so the the path consistency part of this is that tools depths will generally not give you a dependency that's not included by some parent so the example of so like a number 4d version 2.0 is dependent on by e version 1.0 but e 1.0 is not included so in general tools depp's will never actually include that an example like that because you're basically at that point including a version of a dependency that nobody is nobody that's included in the final set asked for so that's just seem weird to me to have that so generally I Tools Depp's is biased towards finding paths where all of the things in a in any particular branch are included so that that really narrows it down to two or three and I think the reason the reason that you end up with two instead of three is it was really based on the order that you encounter things it's kind of biased a little more towards things that are at the top so in that case like two is including two of the second-level libraries whereas three is including a second-level library version and a third level library so and it's not let's not explicit but just because of the way that the code is written it tends to do it'll prefer the number to mavin I would also contend it has this weird property that you've included e1 but you haven't included the thing that it actually said it depended on you included an older version of that library which seems likely to be a problem to being so this all gets extra confusing when you include exclusions in there because you can have cases where you depend on a particular library on one version and then you move to the next version you actually exclude that dependency and so you have negative statements in here as well and like in most logic stuff including negation makes things much more difficult so these things can get big one of these is a based on a DEP season that I pulled from a full kuroh full stack app and the other one is from a lunamon luminous example i I don't know that there are canonical examples or anything like that doesn't really matter this gives you an idea of how many dependencies are included in one of these default sort of combination applications and I would encourage you to look at these and and think about whether those things that are five levels down are actually things that you that you actually need in there and consider these a little bit more I think a lot of people just kind of you know keep bed and stuff in there without really thinking about the consequences of all that but that's just my own personal plea you can also get into cases where you have lots of libraries that have shared common uses this is a particular one that was from a ticket that we had in tool steps it's actually the bat tech Java library batik I don't know how to say it but it it has a lot of self inclusion there's all these libraries sub libraries that all depend on each other and they some of them have exclusions so there was a bug around this that related to not properly tracking exclusions and it would just cycle forever so but you can get into these sort of sub areas where there's a lot of really confusing stuff so some of the complications I tend to see like in general this the simple cases are really are simple if you just have a set of things you follow the tree and you include it all it's no big deal and actually a lot of applications or libraries are like that but you can also have these more complicated things and the exclusion sets is definitely a big area where I've seen some some weird things in particular there are some issues right now with including the same library but trying to override it with maven versus to get coordinate and that's someone I hope to track down and solve soon there are also some pathological cases in particularly around exclusions where you can get into some things where I I've got I've seen some cases from people where I really think there's actually no logical solution to this there is no set of versions that actually satisfies all the things that you have said throughout the transitive dependency graph and in that case you'll get an answer you don't pick one but I could also come up with other answers that are also equally as good or bad depending how you say it and then and that's a case where you need a person to step in you need some a human to step in and say that I need to sort out why these things are inconsistent and make a choice probably at some layer in that tree that says I really need to version and then Jackson if anybody's ever used Jackson's the source of 75% of all dependency problems are Jackson as far as I can tell it's just just my own experience I have no hate for Jackson but they also tend to break AP eyes a lot which is also a problem so once you sort of build up this dependency set there are different use cases where you might want to change things so rich and I sat down and really thought about different things and we just in the last couple weeks added a fourth one but the the first three that we had are pretty good and have served to cover a lot of cases you might just want to add extra dependency so you might have a case where I'm sitting in a project and I want to add a tool right I want to add a test runner to my set of dependencies so you just need to add an extra DEP you might want to override a coordinate and say I'm including closure 1.9 but I want to test and see if closure 110:1 works and so I need to override the version I might want to omit the version entirely and use some default version that's a really good use case that we are not well supporting right now because it's difficult to declare the the library without the coordinate right now so this is an area that I think needs a little bit more thought I think it's a really good use case but we haven't really provided all the tools to get to get the leverage out of it and then the one that we just added recently was replacing the entire dependency set with a new dependency said and that's useful when you have a tool that doesn't depend on the dependencies of your project so I want to run a linter that's just gonna read code and do things but it doesn't need to actually evaluate stuff or something like that I want to so I don't want any of the project dependencies at all I just I just want to use my own dependencies so that's a new one that's out there and then you need to build a class path out of that so that means a class path is just this ordered series of package routes where you're gonna look for classes and clj files and you're just gonna look through them in order in general those class path routes should contain disjoint sets of files they should not overlap at all if they are most of the weird load issues that I see people raise in slack or wherever are places where this has been violated usually without their knowledge so they have accidentally included an uber jar that includes you know a different version of a seal J file or class file form dependency and they included the library and you're not getting and you're getting some of both or something like that or you're including an AO teed version that accidentally includes äôt classes from some other library that you're also including as source files or something like that so in general and there are some tools actually that will let you that will confine these problems there there's some Java tools because this also happens the Java world so there are I can't remember the name of top my head but there are some tools out there that can help detect these problems and they can be really valuable if you have a very large class path where it's difficult to tell where that's happening and then you're going to build a class back which is basically my local plan has my current project plus whatever the dependency paths are of that full transitive dependency set and then there are a couple use cases for class path modification as well so I might want to at the very last second say I know that you're including you know version 1.1 of closure or something like that but I happen at rope closure is kind of a bad example because we äôt include java classes and stuff like that but say I've got some version of the library but I'm actually working on it in development and I want to test some patch to it I might at the very last second one to replace the path that you found in maven and replace it with a pointer to my local path so that's something you can do in with override path you might want to add extra class path paths that's if you're saying you want to run your tests I'm going to include my test source that's an extra class path thing in my class map or I might would just want to replace all of the local paths and that's similar to the replacing all your local depths that's also a capability that's available that's a little less useful than replacing a lot your local devs we have this extensible defense EP career system there are several coordinate types that are defined in right now this is it that we have sort of so we have an extensible systems based on multi methods it's open and all that we do not have a way for you to actually plug into that through clj but if you're using tools depths as a library you can you can plug these things in as desired so the three different coordinate types we have right now or may even get in local those so maven dependencies or an artifact serving you've pulled from a foreign repositories and they always produce maven manifest types so somebody that knows how to go you know go find the dependencies for this particular thing if you have get or local those both end up being files on disk so if you ever get dependency we actually pull it down and cached it and cash the the object directory we also cache the particular commit working directory that you are happen to be looking at the shop for according to the shop so any of those cases you've got files on disk and you need to sort of detect what kind of a project is this it might be a Depp's project that has a DEP seaton or it might be a maven project that's got a pom dead XML and then we also have the special case of a local jar file so this is something that is annoying to deal with at times you might have like a database driver jar or something like that that's not in maven for legal reasons but you know a company's going to give it to you and I need to include it my class path and you can use that through local jar and one of the things we added was the ability to actually will actually look inside the jar and find its pom and actually also include its dependencies so we have the capability there to actually treat it as a intermediate node in the in the depths graph and that was really really irritating to write the code for so the maven api's are not good in this area so some of the different little a lot of the work that we've done over the last two years has been plugging tiny hole after tiny hole to extend what is covered out in the world for these different kinds of dependency Pure's so with maven we have a better plan now for dealing class fires and using classifiers there is still some things around the way that maven classifier selection works that we don't support which are that's another whole story but we support both public and authenticated maven repos we support s3 repos either public or ones that use AWS creds we support maven proxies maven mirrors custom HTTP headers which is used for things like get labs CI and then with get we have both public HTTP and authenticated SSH we don't currently support authenticated HTTP that's one of a cluster of issues that are still underway local wise we support both directories and jars with dependency traversal like I said so that's kind of the scope of what's in tools depths and that's something you can use as a library and there are a bunch of tools now and and different systems that are using tool steps as a library to build class pass which is great I'm glad that we have that it's an option so we also have wrapped around tools depths a program which is called clj or closure and the big thing here is that sort of the belief that builds our programs and and that's different it matches sort of the boot philosophy in a lot of ways it's a little different than lying again or maven philosophy which is really more describing this sort of declared a declarative build I said declarative in quotes anybody who's worked on any sufficiently interesting program knows that eventually that that you're lying in project or maven pom or whatever is going to accumulate profiles and plugins and all sorts of various tools that you might want to use in the course of building your project or developing a project or doing those kinds of things and so we're just put a stake in the ground and say we think builds our programs and and interesting projects end up having interesting build programs eventually so we're looking to start there as having so seal JR closure seal J is a wrapper for closure that just adds interactive stuff so I have seal j / closure here they're really the same the same thing one just has a wrapper for you know read line support that kind of stuff so it's a tool to build project classpass and it uses tools depths do you do that and also to run programs so it combines several different things so this is kind of the scope of clj is the depth chain so a series of multiple DEP seeding files it uses tools depths for to build class pass it has class path caching for performance so once you do the work of computing it we remember it and then it has programa fication by a closure dot main so closure has had a program runner in it since the very beginning call closure about main and so that's sort of heavily leveraged in in closure and it's really just a wrapper for calling that and then there is an alias system for selecting in providing all those modifications that I talked about so the depth chain is an ordered list of DEP studied in files there are really four possible things that might exist and in general its tolerant of having missing versions of any of these there was some DEP seaton file that's in the install there's a DEP seeding that's in your dot closure directory under your home directory and that's sort of your user defaults and that's the ideal place to install tools that you can use across projects the current directory wherever you're in now that is assumed to be your sort of project two directory and so there's expect you to be a DEP seat and file there and then you can actually specify things on the command line with - a steps and so you can actually hard code one right into the command line if you if you want to go go real dynamic and in general the latter ones override the former ones there is a custom custom merge logger to combine all these things together and that's mostly like doing a merge with with merge with some minor deviations for things like paths which are not maps and then there is an alias system and so there are a bunch of different alias types - ours used for mod of occations to the depth resolution process - see is used for modifications to building the classpath the second step - J + - iam are used for adding on JVM ops and main ops and then - a is just sort of catch-all that will actually activate all of those and you might have multiple ones of those or just want to be lazy and not remember that there are four types so most people actually just use - a which is fine and then you can combine multiple aliases together to be activated activated per sort of execution of the clj tool aliases have their own sort of merging system to them and they sort of have custom / / override type merge logic all of the depths related ones all sort of merge with merge so later things override the earlier things paths and extra paths are really about that are really vectors so they basically get concatenated and then there's a distinct done so that you remove any duplicates which are not needed javion ops just get concatenated so they accumulate main ops are a little different in that we only take the last one so basically the last one wins those are not things that generally accumulate well so you get one shot at that basically at specifying a set of options and often those end up being you can also specify them on the command line itself so the idea here is to build sort of a composable tool and I'm not sure how readable that is for everybody but tried to to put this into one picture here you have a bunch of different adepts chained those get merged into some effective depth seaton you have a bunch of aliases that are being specified so everything over here on the left is something you're saying on the command line or is inherent in your environment it's sort of your environmental stuff and then you've actually got args coming in as well all of those things are used to invoke resolved EPS which goes out and talks to procurers which goes out and talks the internet and caches things on your disk somewhere you're gonna make class pass which we also cache and then you're gonna build a class path which ultimately is done to just we're making a java call at the end so there's no magic here you're really just at the end just calling Java with some class path and with some JVM args and with some main arts and so that's really the end result of all this so really we have so but you'll see there's several dimensions of selection and extension in ways that you can combine all these things together either in your DEP seeding file and in terms of which steps eaten files you include in terms of which aliases you specify which activate different things you have different procures that could be getting things from different places so there are a bunch of dimensions here is that is the key and they work together in combination and that was what we were going for and I think it mostly worked out it also has enabled this sort of ecosystem of tools that could work together you can create a configuration that has a bunch of different kinds of tools and Shawn core fields and zerah in the audience has a a def seaton file that is that's gone around made the rounds has a whole bunch of different tools defined in it and that they use internally and so that's a really good example of you know combining all these things together to make a bunch of a toolset that you can use across all your projects or specific projects so you can run a rag a rep hole with your normal project class path that's just your normal clj you can start up your application you can run tests in that case you need to add additional test paths and add your test runner dependencies and invoke the runner and pass additional arguments or you might want to use a linter where you you swap out the depths entirely and then invoke the linter tool and you can do all those things from the same tool and in particular want to highlight that you can define these in your user depth seaton and you can get the same environment across different projects on your system but another key point is that these are just programs so if you go look at these things there they are not in general many of these are not even using tool steps they're you know they're programs that you were in voguing in the context of your project and it can do things in your project and that means that you could go take these programs and you Google use them in line again or use them in boot or use them and make or use them in some other some other place they're not you know they're you're not plugging into a framework you're just writing a program and we provided a way to run it so there's a wiki page out there it's linked from the root of the tool steps github repo that really where people have been great at sort of adding their tools as they build them and this is just the first page of several there are a bunch of different tools out there that can be done and I wanted to do a little shout out to all those people who work on tools depths tools and come talk in slack and and I try to be responsive to those those problems as list of those things and and build things that are that are usable out of all that so I definitely appreciate all these people and and others who I'm certain that I forgot to mention but who are using tools depths in clj and building cool tools and so please keep doing that that's cool some things that we are or I'm still still looking at and working on one thing that I've been actively working on lately she's not quite done yet right now to get access to s3 repos we go through some rapper code that talks to a Lanigan related project for s3 maven wagons which then talks through maven wagons into a framework to maven transporters and it's just this whole mess of stuff and it uses the AWS JDK library so it pulls in all these dependencies and it's doing a lot of work that is really unnecessary so I've been working on a direct s3 transporter that uses the CAC inject ABS API talks directly through HTTP to s3 and you could do that to get to s3 repos and it also I think will allow us to be a little bit better about how we work with issues around Amazon regions and so those kind of things we run into a lot with the current s3 repository stuff so that's an area I think something I've written a bunch of code for and still working on but will happily hopefully get to that we definitely like to get out of being tools depp's alpha and just being tools depths there's a lot of thinking around that and rich and I have spent some time talking about it and it really also kind of it's the same process that we need to go through for spec and you really want to think think about it and do do good good thinking and good work on that and so it's started that process so getting there there are a lot of a lot of the issues that are in the tracker right now are related to get depths in particular we're using jacott and in particular an older version of jacott and that has the that has a particular solution for basically understanding get could get credentials you need some sort of credentials Oracle that can tell you things we don't we don't want those get credentials to be in depths even files we don't want any kind of credentials to be enough seating files we don't really want it to be a thing that we are maintaining or managing at all we want to leverage what's out there and it does have that native git does have some of that stuff Jake it has its own solutions to those things they're newer versions of the gate Jake it have new solutions those things using apache minor so I have spent some time working on this and and I haven't decided exactly yet what needs to be done there but that's an area that I think is definitely ripe for some some work still and that will give us access to things like you know authenticated HTTP and getting access to some of the newer encryption that happens on some of the host keys and different stuff like that there right now I think rich asked me before I did this talk like you know like overall like how are things going with all stuff you know and and I think that clj has been really good for getting started like it's been it's made it really easy to just get started working it get a rep ole get to use some tools and do some basic things and there are tools out there for doing things like packaging deployment that exists that is an area where I don't think we have done as good a job as sort of corralling what all the options are and making a good canonical path for people to do things like I just want to make a project and upload it to pull jars or whatever so that's an area that I think and I don't know exactly what's needed there there's a lot of things that exist already so I'm not sure whether it's making a new thing or just making the things that are there better or better documented or what it is exactly but that's an area that I think could use some more attention and definitely will like we'd like to do that in the coming months and I mentioned default depths earlier is an area where I think we could do some more thinking in that area get some more get some more leverage one more thing I'm gonna pull of Steve Jobs if you liked all those def graphs I showed you today I released a new tool to make them today so there's a tool steps graph out there now as of this morning it can make def graphs it can also make those sort of expansion graphs that have the green in the red those are very interesting for me because it allows me to trace the process of tracing through and where it makes all the decisions basically and so but it will generate one grunt one graph forever a PNG for every step of the expansion so some cases that's 200 graphs or whatever but for me as I found out to be really useful and looking through and understanding places where decisions were made and things like that and I think there's some more ideas there things to do there but so there are both the you can if you just use it by default it'll show it to you it'll just pop up with your or you can output it to a PNG or you can use the trace mode which will output many PNG s right now and then the other thing to mention is that the current version of clj has a new option called - s trace that will emit a file called trace Eden which is a text file an Eden file that has a description of every step of that expansion and the decision I made and why I made that decision so same things that are shown up on the graphs which I think is a lot better for understanding why something happened than the old secret verbose mode that I've used to debug stuff in the past and so this can actually take a trace file so if somebody has an issue and they can you can omit a trace file and send it to me I can work from that so you can do that as well that's it have fun [Applause]