Analysis Summary

Performed authenticity

The deliberate construction of "realness" — confessional tone, casual filming, strategic vulnerability — designed to lower your guard. When someone appears unpolished and honest, you evaluate their claims less critically. The spontaneity is rehearsed.

Goffman's dramaturgy (1959); Audrezet et al. (2020) on performed authenticity

Worth Noting

Positive elements

- This video provides a clear, high-level rationale for why Clojure's immutability and data-centric design align well with the requirements of Apache Kafka and distributed systems.

Be Aware

Cautionary elements

- The use of 'competitive advantage' and 'crushing enemies' rhetoric transforms a technical tool choice into a high-stakes emotional conflict to drive adoption.

Influence Dimensions


Knowing about these techniques makes them visible, not powerless. The ones that work best on you are the ones that match beliefs you already hold.

This analysis is a tool for your own thinking — what you do with it is up to you.

Related content covering similar topics.

Datomic Cloud - Datoms

ClojureTV

The Taming of the Deftype Baishampayan Ghose

Zhang Jian

Exercism Summer of Sexp - solving challenges with Clojure

Practicalli

Composable Tools - Alex Miller

ClojureTV

Understanding Core Clojure Functions Jonathan Graham

Zhang Jian

Transcript

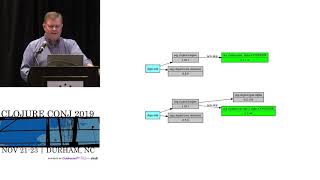

I'm really excited to introduce our first speaker. He's the founder and CEO of Operatr, he has spoken here at the Conj in the past, and he's a great fan of Clojure, so it's my pleasure to introduce Derek Troy-West. Good morning, and thank you for having me at the Conj to speak about something that I'm really pretty passionate about: Clojure, and building things with Clojure. As Alex mentioned, I run a company called Operatr, and we build the very best tooling in the world for Apache Kafka. We built that tooling in Clojure and ClojureScript, we've had quite a lot of success with how we build it, and we think Clojure is a phenomenal language for product development. Hence the talk, "Follow the Data". I'd like you to keep that exhortation in mind as we run through the slides on product development in Clojure. Now, I have something slightly awkward to do before we start, because I was talking to my wife about coming to America: how do you all feel about a little bit of hooting and hollering? Is that something... yeah? Okay, all right, thank goodness for that, because I'm quite committed to it; it's actually a slide that I've got in there. So, my partner and CEO in our company, Kylie, has a big background in event management, and we have an enormous respect for the people who put on and organize events like this. Alex Miller and Kim Foster have done a phenomenal job in getting us all to this point. Thank you, thank you, thank you! But just... Americans, calm, calm, be calm yourselves, there's a slide, right? So, one second. And also the event crew here at the Durham Convention Center, who are going to take us through the next two and a half days. So if I were to ask you, everybody at the Clojure/conj, how excited are you to be kicking off this year's Conj, you would respond... [Applause] That's not too bad. It's about the equivalent of telling a primary school in Australia that they can play cricket at lunchtime, so you've done okay. So what do I want to talk to you about 
today? I want to start... well, I want to roll through five things, basically. I want to start with a question that we often hear in our community: what is Clojure for? Right, let's start with a really big question for the second slide of the Clojure/conj. Secondly, I want to introduce you to something that we think is pretty interesting: what is changing in our world? And I strictly mean the world of software development, not your political system, which seems to be exploding at the moment. I didn't touch it, honestly; I just flew in a few days ago, it had nothing to do with me. Why is that change exciting for Clojure? What problem does our product solve that is related to that change? And then let's enumerate some of the reasons that Clojure makes Operatr really great. The things I want you to take away from today are, first, some shared enthusiasm. I know we've got some people at the Conj who are really deeply experienced in delivering stuff with Clojure, and a lot of the things I'm going to talk about have probably occurred to you, but we've also got some people at the Conj who are new to this world and want to understand what they can get out of Clojure. For me, I stumbled on Clojure eight years ago after more than a decade of enterprise Java development, and the thing that I got from Clojure personally, more than anything, was a real kick in the pants. I needed it, because I had lost my curiosity at that time, and Clojure returned that to me, and I'll be forever grateful for that. So I want to share some of that enthusiasm with you today, and some shared experience, because as a small business owner, the single most important thing that Clojure gives us is a competitive advantage. I want you to remember that when you're trying to talk to your colleagues in your organizations and convince them that they should use this wonderful data-oriented Lisp that runs on the JVM: you're going to run into a brick wall at some point, and when you run 
into that brick wall, you should just out-compete them, because isn't that what we all really want: to see our enemies driven before us and to hear the wails and lamentations of their programmers? It's not just me? Okay. So keep that in mind: it's not all about the joy of Clojure, right? There should be some aspect of fear of your competitors using Clojure while you're not able to. So let's start with a big, big, big question: what is Clojure for? Well, I would like to answer that question for you, but this year someone has been living their best life on Twitter, and they have answered it better than I possibly ever could. I'd like to introduce you to Mr. Stuart Halloway, if you haven't heard from him before. He says: "I use Clojure because the fastest way to implement non-trivial projects in Java is to create Clojure and then use it." It's a bit bonkers, isn't it, really? The thing I find really impressive about that tweet is that I'm not actually even sure he's joking; I think he's serious. My team doesn't have the ability to create languages like Clojure, and fortunately we don't have to, because the great team at Cognitect stewards that language for us. But other than that, I have a real affinity with what Stuart says there, because in my career so far, 20 years of building products for companies, I can honestly say that every single system I have ever built would have been better off being built in Clojure. And you know, that's a bit of a vacuous statement to make, really, so let's drill down and figure out why. Let's ask another really big question (we're going to get into metaphysics shortly, and then I'll just float away in a little cloud): what's our purpose? Well, sadly, when I'm sitting at the computer, I'm not creating art, which is probably what I'd like to do, and I'm not playing games, which is probably how I'd like to waste my time. Predominantly, my career has been focused on building products, the bottom right-hand corner of the selection of things that I could spend my time 
doing. I've built products for companies all around the world, and generally there's a singular focus to the products that we build: we take some data, we filter it, we transform it, and we put it somewhere. I'm quite comfortable telling you that that's every single system I've ever built. Now, it's true that it's really hard to get developers to focus on just building products, because they're always going to try to build a framework, right? They're always going to try to build a language; that's what we want to do as creative, expressive people. We don't want to just build a system that sells widgets and pulls data out of somewhere and moves it somewhere else. But really, the focus of everything we've done over the years is building products, and there's a big lie that's told to us when we're starting out in our careers in software development: that there's one way of solving these problems, and it's object-oriented design. So as a young developer learning Java, I came up trying to solve data-oriented problems with object-oriented design. You can do that if you want to, that's fine, but it's not the most effective way of building products. I think it's a little confusing, because it is probably the best way of building frameworks, where you're trying to capture some ethereal, abstract idea, give it some concretions, and throw it over the wall so that people can use it. I have no idea what you would use to build languages, but my career has all been about shifting data around, and that's why I think I love Clojure so much: there's a real affinity with this data-oriented language that thrives on the JVM and in the browser. And I think that's mostly your careers as well. It certainly seems to be, because this is the response to this year's Clojure survey: lots and lots of us work in financial services and enterprise software (I've rolled them up into one category, really), and what is that, if not managing data while having some concerns 
about security and other things? So that brings me to the big... I really am sorry for this slide. The big question: what's changing in our software industry? Well, there's a sea change in the enterprise, I think. (Don't let me be lonely up here; it's two and a half days of my life after this, and I'm really trying very hard.) There's a sea change in the enterprise, and it just so happens that this sea change is in exactly my company's area of expertise. So this is enterprise architecture, right? This is the standard architecture for how things are built for mid-level companies around the world: there's a database, there's a Java application running, it's got a UI front end, and that's how software has been built. The predominance of software in the enterprise looks exactly like that. It might not have been built this year, but companies are carrying around software that has been in existence for 15 or 20 years, all through Durham and all throughout the world. But there's a problem, you see, because enterprise concerns are changing. Whereas previously the enterprise was predominantly concerned with security first, and other sorts of constraints, every company that I talk to in Melbourne today is concerned about real time, scale, and availability. That might just be because all of these companies come and talk to us, since it's our particular niche in our small industry in Melbourne, but we're run off our feet by companies that have that sort of architecture. It's not real-time, it doesn't scale, and it's not highly available, and they want to know how to bridge that gap while still delivering product. Well, let's put some meat on these bones. "Real-time" has some precise meanings in engineering, and none of them are particularly important in this case; real-time just means that it has some sense of happening in the now. To give you an example, if you're a startup bank and you've got a 
customer onboarding process, there's no point telling me tomorrow whether my application to join the bank was successful, because in the time it's taken you to batch-process that, I have signed up with your competitor, got a twenty-dollar bonus, bought myself a coffee, and deleted your app. So it's really a matter of life and death for companies today, where those sorts of systems would previously have been built in a batch-oriented way. Scale is a concern for every enterprise that we talk to, simply because they have so much more data than they ever had in the past, and they don't know how to deal with that ingress of data at volumes higher than they've seen before. And availability is a pressing concern for the same competitive reasons as real-time, really: you have to be always on, and you have to always meet your customers' demands. There's also a slightly different aspect to availability, in that you should have a convincing story for how you're going to recover when things do inevitably go wrong. And it turns out that immutable time-series compute is a really, really, really good way of building these systems, and that really means distributed compute. So the enterprise is moving towards distributed compute for bog-standard product building all around the world, as far as I can tell. And what is the very best way of delivering distributed compute on the JVM with a standard Java mindset? Well, luckily there's a wonderful language that is a perfect fit for distribution, and distribution is the essence of how we achieve real time, scale, and availability. It runs on the JVM, but it isn't Java. It has immutability at its heart, because immutability is absolutely key for distribution. It's a functional, data-oriented language; it works with sequences and maps. It's a dynamic language; it cares very little for types. We find when we're building systems in this language that it's really good to separate side-effecting functions from pure ones and to push the side-effecting functions to the edge. You 
can create really sophisticated systems from very simple tooling. It supports interop with multiple host environments. It took two and a half years to progress into an open-source project (I've probably labored this gag a little bit too long), it was released in 2009, and you've probably all guessed: it's Apache Kafka. Some of you didn't get it... Rich Hickey knew, I think, when he released Clojure. So this is the thing about Kafka: commonly everyone knows it as a message broker, but it really isn't. It's a programmatic model for building real-time, scalable, available distributed compute on the JVM. Jay Kreps, who was at LinkedIn and is now the CEO of Confluent, writes a bunch of phenomenal articles. Unfortunately I couldn't find the quote for this talk, but I'm certain he said that their purpose when they built Kafka the message broker was to build a great distributed immutable log that they could then leverage for distributed compute, because this is a real necessity in the world that we see today. So Apache Kafka comes with a whole bunch of constraints that look wonderfully Clojure-ish, don't they, really? And it's quite wonderful to me that Java developers all around the world are grabbing the Kafka Streams library, which really does support all of these concerns and constraints, plugging it in, and building systems that represent some of the best ideas in Clojure without really realizing it. That represents a massive opportunity for Clojure, and that's what I want to impress upon you today: the enterprise is changing, and the way that we build systems is changing. We are moving away from mutable data stores and lossy systems, and we are moving towards immutable time-series compute. Maybe it's Kafka that really pushes that out to companies around the world, maybe not, but I believe that all of those constraints and all those big, bold ideas that have been expressed through Clojure over the years will be represented in any of these systems 
because some of those key things, immutability and functional composition, are really necessary for distribution. So you have a choice today when you're building these modern systems for the enterprise: you can pair a framework like Kafka that's inherently data-oriented with a programming language like Java that is inherently object-oriented, if you want to, but you'll get much better results if you use a data-oriented language. And it's not just because of some shallow similarities between the framework and the language. It really is that distributed systems don't care about your domain model. They don't care what your conception of your data is; they don't care what concretions and constructions you build on top of the raw truth of the data coming into your system. They only care about how your data is located: data locality, data temporality, data durability, data partitioning. That's what Kafka cares about, and it's the same story with Apache Cassandra and any other distributed system that gives you real time, scale, and availability. So it might surprise a few people out there to hear this, but if you're plugging an ORM tool into your application today to write some data into Kafka or Cassandra, you're most likely doing it wrong, because you need to orient yourself differently. Inherently, you need to understand that the products you're building are data-oriented products, and what you really want is a fantastic data-oriented language; or so we've found, anyway. So this is the fulcrum of how Kafka provides distributed compute to you. It's called the consume-transform-produce idiom, and it's the idea that you can pull data off a topic, which is basically an immutable, distributed sequence in Kafka, process it one message at a time, and put the results onto another topic. It's an input, a computation, and an output, and that just looks like a function to me. It might be a pure function, it might be a side-effecting function; that's the essence of functional 
composition. We start with this very basic element, and then Kafka Streams or the Kafka Processor API starts to decorate and grow the capabilities of the library based on that. They provide you functions to map, filter, reduce, and aggregate, and you end up building systems that are really topologies of computation that communicate with one another through unbounded buffers, which I'm sure doesn't give anyone any concerns. That's how we distribute, that's how we get no single point of failure, and that's really the essence of distributed compute via Kafka on the JVM today. So we take a problem that looks like this, an architecture problem, and we solve it with a brand-new architecture that looks like that, and every senior developer in the room will realize: I used to have a problem, and now I don't have any problems at all. That's wonderful, that is. And we haven't even really... I made these slides quite late last night and didn't even have time to put in the essence and depth of... did I mention that they're perfectly unbounded, limitless buffers? Oh dear. However, I should say that the architecture for distribution does hold up; it does what it says on the tin. My company was working with a client years ago that was processing email, and then Yahoo blew up like the Death Star (there's a technical financial term for their demise: it splintered). The company we had built some systems for all of a sudden had three orders of magnitude more data to deal with. We had built those systems with Clojure, Cassandra, and Apache Storm at the time, which we don't use anymore. And how did we resolve that issue of three orders of magnitude growth in data within a nine-month period? There was some engineering to be done, but we added two Kafka machines, two Storm machines, and about 20 Cassandra boxes, because the architecture does stand up. So this is where Operatr comes in, and I'd like to give you a little bit of a demo, because Operatr seeks to 
solve this problem for developers in this space. You build distributed streaming compute with Kafka, you throw your applications out into the wild, and all of a sudden you have problems that you didn't have before: things to monitor that you didn't have to monitor before, and that's problematic. What we used to do for many years was bundle something together with sticks and twigs, maybe Riemann, maybe some monitoring, and we would get some observability over these systems. But our clients have progressed beyond that now; we can't do that anymore, we need a specialized tool. So we built it, and I'm just going to show you what it looks like. In fact, it may already be running; here it is. I won't make it fullscreen, because I'm going to be flipping around between some different odds and ends. So this is Operatr, and there's something interesting that you might notice from that very first line: you're connected to a simulated Apache Kafka cluster. It's running in your browser. So let's have a look and see what that looks like, and we'll come back and touch on that in a few minutes. Operatr is a tool that sits close to Apache Kafka. It's written in Clojure and ClojureScript, like I mentioned earlier, and it's really a straight value-add by developers, for developers in this space. It's just a single jar: you spin it up and it starts snapshotting the cluster, comparing snapshots, progressively computing all sorts of metrics, and showing the raw snapshot data to you. We seek to give you every piece of data we can about your running system, your consumption of data off of Kafka, and everything in between, in a nice little UI. Some of these graphs won't mean a lot to many people in the room, but you can do things like pop in, look at a topic, look at some data. Transit is a first-class data serialization format in Operatr, for reasons that will become very obvious shortly. We can drill down into brokers, which is one dimension, and we can look at topics and groups. There's just a 
plethora of data in here that we've surfaced in this tool. The most important thing is in groups: this is where we look to see how our unbounded queues are operating. The problem with Kafka's own internal telemetry is that there's not enough of it, so for this telemetry we don't use any of Kafka's JMX metrics. We compute all of our own, and we snapshot it, because we've got a wonderful data-oriented language that lets us do that. So we can create telemetry where we look at the lag of a consumer group by the group itself, by the hosts that are hosting that group, by the topics that the group is trying to consume from, and by the brokers hosting those topics, because in reality these are all dimensions we need to know to figure out how our system is operating. That would have saved me three days of time with a client at Christmas, which is why we built Operatr. And I'd like to share with you now five advantages of Clojure for building Operatr. Number one: datafy. It's more about the ethos than anything else, I think. Datafy, if you're not aware of it, is a protocol that was recently released in Clojure 1.10, and really it's just a protocol with no implementation that's meant for people to pick up if they're building tools, I think. But the intent is that you can pass an object to datafy and it will turn it into data, and if that doesn't capture the very best of Clojure, I'm not sure what does. In our example here, I've got a function called list-topics. When you start Operatr, it starts up in a Java jar or a Docker container and immediately starts snapshotting your cluster at a set interval, retrieving about a dozen different snapshots. But those snapshots come back to us from the Kafka Java API that we're calling as Java objects, and, yeah, I don't want Java objects; they're no good to me. This is data: what 
I'm looking to do is filter, map, find the frequencies, and use all of Clojure's core data functions on that data to do cool stuff and create a product. So the first thing we do at the boundary of our system is datafy everything, and I'd like to show you a little example of what that looks like. To do that, first off I'm going to have to start a three-node Kafka cluster running in Docker on my machine. It'll take a minute; talk amongst yourselves or something. At the same time, I'm going to get into IntelliJ and open a REPL, and you're going to see just how difficult it is. How's that font size, is that okay? Everyone can see that fine? We're going to start Operatr running; that looks like it's just about started to me. So let's start Operatr. It's quite straightforward, really. One thing I do want to point out: it's got a nice little bit of ASCII art when you start it up. A child of the 80s, I can't help myself, really. It tells us it has started on localhost:3000 and is connected to a particular Kafka cluster, and what you can see down here is that straight away it starts snapshotting: it starts pulling stuff out of that Kafka cluster that's really valuable to us and that we want to display. So let's have a look at what that system looks like. There it is. It's an Integrant system; we use Integrant, not Component, because Integrant is the very best method for raising data into system state in the Clojure world today. That system has an admin client on it, which looks a bit like that: it's a Java object. So I'm going to use that admin client to list topics, which is the function I just showed you, and there they are. So I've made a call to a Kafka cluster via Java interop, I've pulled back a very, very, very short object hierarchy, and at this point I've converted it into data, which is what I want. Do you know what we do with that data? We put it 
straight onto Kafka, because we build immutable time-series compute, and then we have some streaming compute, which I'll show you in a moment, that computes over all of that data and creates exactly the output we need. So Operatr itself is a linearly scalable, highly available distributed system that doesn't have anything to it other than Clojure and Kafka. The actual implementation of datafy in our system is a little bit broader than the one element I showed you here: it's literally every single thing we could possibly be pulling back from Kafka. Every interaction with Kafka at a boundary of our system, we immediately drop into data, because that's what we want; that's what our systems live and breathe. And there's real value in going straight to the data: we find simulation to be absolutely, categorically key for us. You might have noticed, because I told you explicitly, that this is what I call a backless version of Operatr; I don't really know what the proper term is. Operatr really has two parts to it, the front end and the back end (it's really super complicated), but we can run Operatr without the back end, because the back end does the work of pulling in data from Kafka, computing over it, and sending it to the front end. So in this version we just pretend that we've pulled stuff in from Kafka, compute over it, and send it to the front end. Actually, we don't send it to the front end, you see, because of CLJC files, but that's about two slides away, so give me a second. That allows us, as a company that builds products, to have an entirely simulated version of our product that is 100% legitimate. I'm using a Leiningen alias here to run it with Figwheel (thank you very much, Bruce Hauman, for your hard work), and that's great for doing live development on the front end of our Clojure products without having to plug the back end in, which I actually have plugged in anyway; I'm running them both at the same time 
right now. I can look at localhost:3000, and there's my actual Operatr, running as if you'd downloaded it from Docker Hub, against the Dockerized Kafka cluster, and I can do exactly the same things. I can look in my metrics topic, and this time it's not generating anything; it's actually pulling that data out of Kafka. But you would never know, right? Why would you? In the simulated version we just generate that data, and generating it is not particularly complicated. It looks a bit like this; there you go, that's all of the possible state that a Kafka cluster of a particular version could generate for you. The great thing about having that facility: recently I sent Operatr over to a wonderful team in London, who plugged it into a cluster to do some evaluation of our product. They're very kind, and a day later they came back to me and said, "Look, Derek, this is pretty good, mate, it's pretty good, we like it, but the front end's a bit laggy, isn't it, really?" And I thought to myself, not really, actually; I think it's pretty bloody good. But I said, "Chuck me a snapshot of something, give me some stats: what are you looking at?" They sent me a screenshot, and it turned out that their cluster was 10 times bigger than anything I had anticipated anyone plugging it into this month: they had over 500 topics and 10 billion messages. And you know what, the front end was a little bit laggy at that scale, to be honest. But how did I know that? Well, you see, I went into the generator that powers the generated front end you're looking at, changed the number of brokers to 50, the number of groups to 100, and the number of topics to 505, which generates about 10 billion messages. Then I had a look at it and thought, it's not too good, actually, mate. Then I fixed it and sent it back to them, and when I sent it I knew what they'd come back with, and they did come back and said thanks very much, 
mate, that's way better now. It's pretty good, this Clojure stuff, everybody, for a small company that's trying to knock it out of the park. I'm not even joking; it's pretty good stuff, isn't it, really? Give up on object-oriented design, everybody; it was always a load of old bollocks. So, advantage number three (and I've got to be careful of time, because I'm going to run out of it rapidly): Clojure, ClojureScript, and CLJC. I cannot overstate how valuable it is for a product delivery company to have a phenomenal data-oriented language that works on the JVM and in the browser. That problem I just described to you, how did I solve it? I moved some functions from the browser to the back end, I filtered some data early, and then I changed the front end to pull data rather than have everything pushed at it, which is naively what we were doing to start off with. And actually, I didn't even have to move the functions, because we have come up with a bit of a strategy and ethos in our world: if you're going to be side-effecting on the JVM, write a Clojure file; if you're going to be side-effecting in the browser, write a ClojureScript file; and if you're not doing either of those things, it's a pure function, and it may as well be a CLJC file, because you don't know where you'll want to pull it from. I didn't know we were going to be running a generated front end for Apache Kafka in the browser when we started on the project. I just had to do a demo, so I turned around to my colleague and said, "Mate, you reckon you could knock that together?" Then I went out and had a nice coffee, came back, and he'd pretty much knocked it together. So it's good when you've got these big, broad ideas; thanks to Stuart Sierra for telling me, many years ago now, to separate my side-effecting functions from my pure ones. Now here's a really good advantage for you, and this one's the absolute killer: Transit. My goodness gracious me. What happens to our 
data? This talk is called "Follow the Data", right? So what happens in the life of data in Operatr? We periodically snapshot it (you can see my little cluster here, still wildly wailing away; it's wonderful). As soon as we get that data, we chuck it onto a topic. Later on we consume that, compute over it in a streaming compute topology (I'll show you a code example, because I am going to have time for that), and put the result into a KTable. Then we consume it again and put it back onto a topic, then we consume it off that topic and send it to the browser. We receive it in the browser, and with re-frame we update some state, generate some graphs, and show it to you. Sente, which we use for the WebSockets, and Kafka both support configurable serialization and deserialization, so we just say "that's Transit, mate" and forget about it. We're just working in Clojure data structures everywhere, and that's an enormous amount of incidental complexity taken off our plate. It's really great. And number five, my last phenomenal reason to use Clojure for product development, is straight interop. This is one example of interop: a streaming compute topology that computes metrics inside Operatr. Really, I'm glad we're a bootstrapped company, because otherwise someone would probably complain about me showing you that code. You're not allowed to write it down, all right? You've got to forget it. It's basically just Java, right, but it's very Clojure-ish Java, and it's very accessible from Clojure. I can't overstate the affinity between Clojure, the JVM, and the browser for building these products; it has given us enormous leverage to get something to market. So I want to give you something that you can take away and do some really cool stuff with. It's not there yet, because I was panicking about this talk until very early this morning, but we're going to open-source our template project for doing work like Operatr; 
It's just called operatr-io/shortcuts, and it's those technologies which are the backbone of what we do to build products like ours. Now, the question that often comes up in our community is, "What frameworks do you use?" The truth is we don't use any; we just have a bit of Clojure glue that plugs them all together. So we'll open-source that as well, and you can see how we've plugged it together, and you're welcome to take it and use it to your heart's content.

So, to recap: what is Clojure for? Well, Stuart Halloway tells us, and he's absolutely correct, that it's for making stuff. But better than that, it's for making products for enterprises, and better than that, it's for making real-time, scalable, available products for enterprises. That's what's changing about our world, and it's an enormous, exciting opportunity. I hope you'll all agree at the end of this talk that Clojure can be the heart of that change, and that's very, very, very exciting for Clojure. How good is Operatr, though? It's pretty good, and it's really powered by all of those simple, great fundamental ideas that we took from Clojure. I just want to leave you with the thought that it's okay to build systems with data and functions first; you don't actually have to go beyond that. Operatr has precisely zero new protocols defined. There was one, called Serializable, and then Datafiable came along and made me realize it was a much better name, so we deleted ours and use datafy instead. There are no defrecords, because we use Integrant; we don't slap protocols on defrecords to make things seem official, do we, everybody? No, we don't. There are no deftypes, because I've never used one, but I've never used the 4-iron in my golf bag either; I'll keep it there, might need it one day. It's just data and functions, and it's a sophisticated system that we're really proud of. So I'd like to say thank you all for having me here to talk to you today. Thank you to the team at Cognitect for your stewardship
of this language and for giving it to us. Thank you to my wonderful wife Kylie for being the CEO, the inspiration, and a bit of a genius behind our company, and thank you to my two beautiful kids for being wonderful. I'll see you in a few days. Thanks, and come and say hello; I'm here by myself, so don't leave me out there. [Applause]
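The fix described under advantage number three has three parts:

- filter data early;
- keep the filtering logic pure, so it can run on either the JVM or the browser;
- have the front end pull data rather than be pushed everything.

The sketch below illustrates that shape in Python. It is not Operatr's code; the talk's version lives in Clojure, ClojureScript, and `.cljc` files. The names `filter_topics`, `Server`, and `pull`, and the sample record fields, are hypothetical.

```python
"""Sketch: a pure filter usable on either side, plus pull-based delivery.

Assumption: records are plain dicts. All names are hypothetical stand-ins
for the Clojure/ClojureScript code described in the talk.
"""

from typing import Iterable


def filter_topics(records: Iterable[dict], prefix: str) -> list[dict]:
    """Pure function: no I/O or mutation, so it could live in a shared (.cljc-style) module."""
    return [r for r in records if r["topic"].startswith(prefix)]


class Server:
    """Side-effecting edge (the JVM side in the talk): holds the latest snapshot."""

    def __init__(self) -> None:
        self.snapshot: list[dict] = []

    def update(self, records: list[dict]) -> None:
        self.snapshot = records

    def pull(self, prefix: str) -> list[dict]:
        # Filter early, on the server, and only when the client asks for data.
        return filter_topics(self.snapshot, prefix)


server = Server()
server.update([
    {"topic": "orders", "lag": 5},
    {"topic": "orders-retry", "lag": 0},
    {"topic": "payments", "lag": 12},
])

# The client pulls only what its current view needs instead of receiving everything.
view = server.pull("orders")
print([r["topic"] for r in view])  # ['orders', 'orders-retry']
```

Because `filter_topics` does no I/O, the same function could be called on the client instead. Only the side-effecting `Server` edge would change, which is the point of keeping pure functions in shared files.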

Video description

Enterprise development is increasingly turning toward streaming compute to meet the demands of scalability, availability, and real-time processing. We developed OPERATR, a tool that helps development teams navigate the complexities of building streaming compute with Apache Kafka. It is built entirely in Clojure and ClojureScript, and in true Clojure style is more like a library than a platform. I’ll talk about why I think Clojure is a superb fit for product development, and we’ll discover the advantages of Clojure as we live-code data from Kafka through the JVM, and into the browser. After this talk I hope you’ll agree that streaming compute is the future, and Clojure is its heart.
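The talk's "follow the data" path has five hops:

- snapshot;
- topic;
- streaming compute into a table;
- topic again;
- browser.

It works because every hop shares one configurable serializer, which is Transit in Operatr's case. The sketch below mimics that shape in Python under stated assumptions:

- JSON from the standard library stands in for Transit;
- in-memory lists stand in for Kafka topics;
- a plain dict stands in for a KTable.

The names `Serde`, `Topic`, and `metrics_table` are hypothetical. This is not the Kafka, Kafka Streams, or Sente API.

```python
"""Sketch of 'follow the data' with one pluggable serde at every hop.

Assumptions: JSON stands in for Transit, lists stand in for Kafka topics,
and a dict stands in for a KTable. All names are hypothetical.
"""

import json
from collections import defaultdict
from typing import Callable


class Serde:
    """Pair of functions configured once and reused at every boundary."""

    def __init__(self, ser: Callable[[object], bytes], de: Callable[[bytes], object]):
        self.ser = ser
        self.de = de


JSON_SERDE = Serde(
    ser=lambda v: json.dumps(v).encode("utf-8"),
    de=lambda b: json.loads(b.decode("utf-8")),
)


class Topic:
    """In-memory stand-in for a Kafka topic: stores bytes, never live objects."""

    def __init__(self, serde: Serde):
        self.serde = serde
        self.log: list[bytes] = []

    def produce(self, value: object) -> None:
        self.log.append(self.serde.ser(value))

    def consume(self) -> list:
        return [self.serde.de(b) for b in self.log]


def metrics_table(snapshots: list) -> dict:
    """Streaming-compute stand-in: total lag per topic, kept as a table (KTable-like)."""
    table: dict = defaultdict(int)
    for snap in snapshots:
        table[snap["topic"]] += snap["lag"]
    return dict(table)


# 1. Periodically snapshot the cluster and put each snapshot onto a topic.
snapshots = Topic(JSON_SERDE)
snapshots.produce({"topic": "orders", "lag": 3})
snapshots.produce({"topic": "orders", "lag": 4})
snapshots.produce({"topic": "payments", "lag": 1})

# 2. Consume, compute, and write the result back onto another topic.
metrics = Topic(JSON_SERDE)
metrics.produce(metrics_table(snapshots.consume()))

# 3. Consume again and hand plain data to the UI layer (the browser in the talk).
ui_state = metrics.consume()[-1]
print(ui_state)  # {'orders': 7, 'payments': 1}
```

Changing the serde in one place changes the wire format at every hop, while the code on either side keeps working with plain data. One caveat on the stand-in: JSON, unlike Transit, does not preserve richer types such as keywords, sets, or instants.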